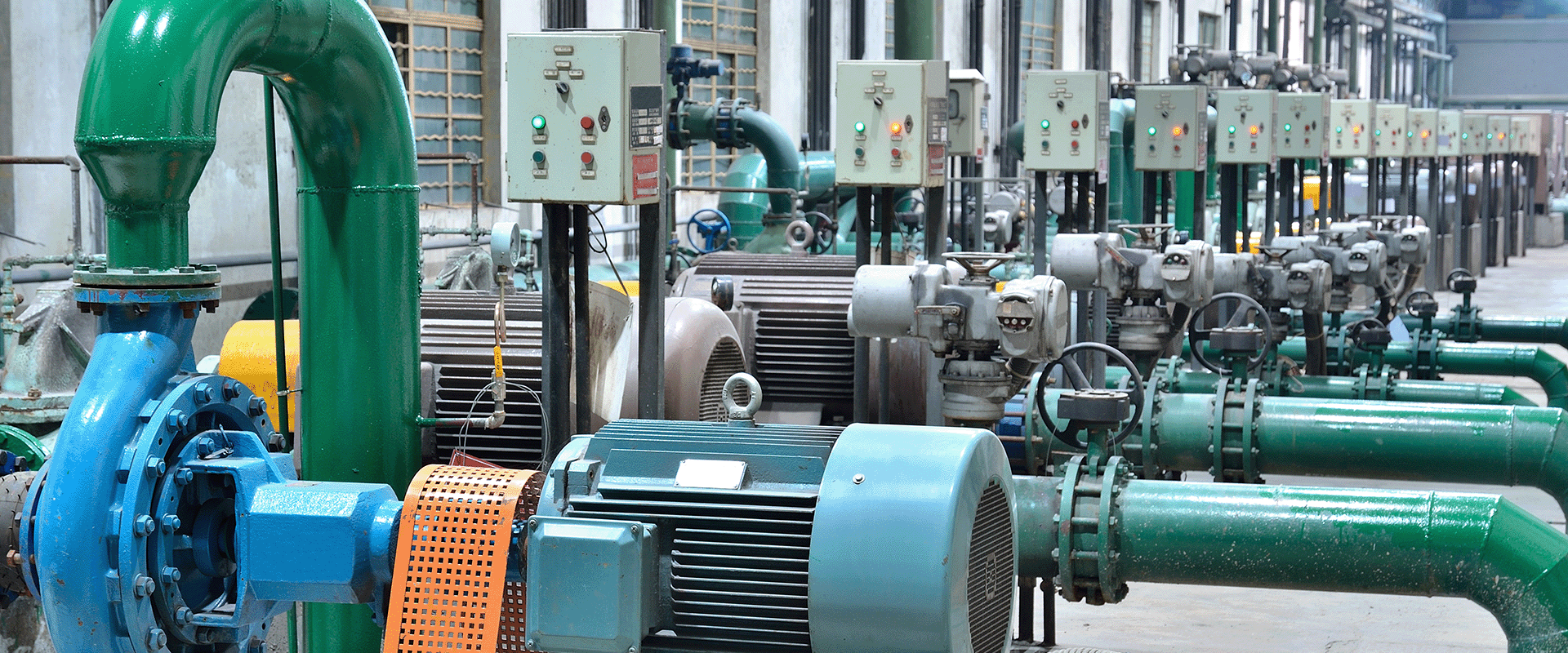

From extraction and treatment to pumping and distribution, nearly every stage of the water cycle uses energy. In fact, energy costs can account for up to 40% of a water utility’s operating budget, with pumping alone comprising the lion’s share...

by Neda Simeonova